Have you ever had a sound replay in your mind, as if stuck on a loop? Whether it’s a catchy song or a phone ringtone, these auditory sensations, known as earworms, continue in our brains even when no external sound is present. They are a product of our imagination, or what is known in scientific terms as mental imagery. A recent study published in the journal Cerebral Cortex delves into auditory mental imagery and the cause of the earworm phenomenon, tracing its origins to the brain’s motor and memory systems.

In auditory imagery, the auditory cortex becomes activated, creating the perception of sound in the mind, despite the absence of any real sound input. “Neuroscientists have traditionally focused on how this mechanism influences information processing and behavior,” explained Associate Professor of Neural and Cognitive Sciences Tian Xing, who led the research. “Our study, however, wants to answer the fundamental questions of the origin and functioning of auditory imagery.”

Understanding the mechanism of imagery has long stumped cognitive neuroscientists. Drawing on previous empirical evidence, Tian’s team hypothesized that imagery can be generated from two sources: the motor system and the memory system. They set out to test this hypothesis with a novel dual-imagery paradigm that maximized the differences between motor-based and memory-based imagery. Participants were instructed to imagine either human speech or natural sounds.

In the imagery experiment, participants watched a muted video and imagined the sounds

In the experiment, participants watched a muted video of objects in motion, such as a basketball bouncing on the floor, and were asked to imagine which sounds should accompany the footage, for example a repeated “whomp” sound of the basketball. In another scenario, participants were shown text superimposed on videos, and asked to imagine themselves saying the words, without moving their lips. Functional magnetic resonance imaging (fMRI) was used to capture participants’ brain activity throughout the tasks.

The findings revealed that both types of imagery activated the auditory cortex without any sounds, with the former (imagined object sounds) originating from the memory-based system and the latter (imagined speech) from the motor-based system. In both cases, participants “heard” the imagined sounds and speech in their minds without any actual sound being made.

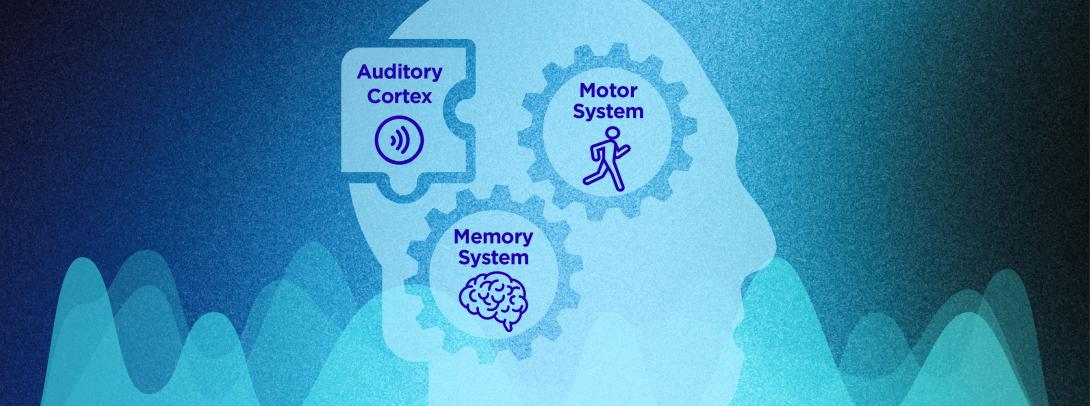

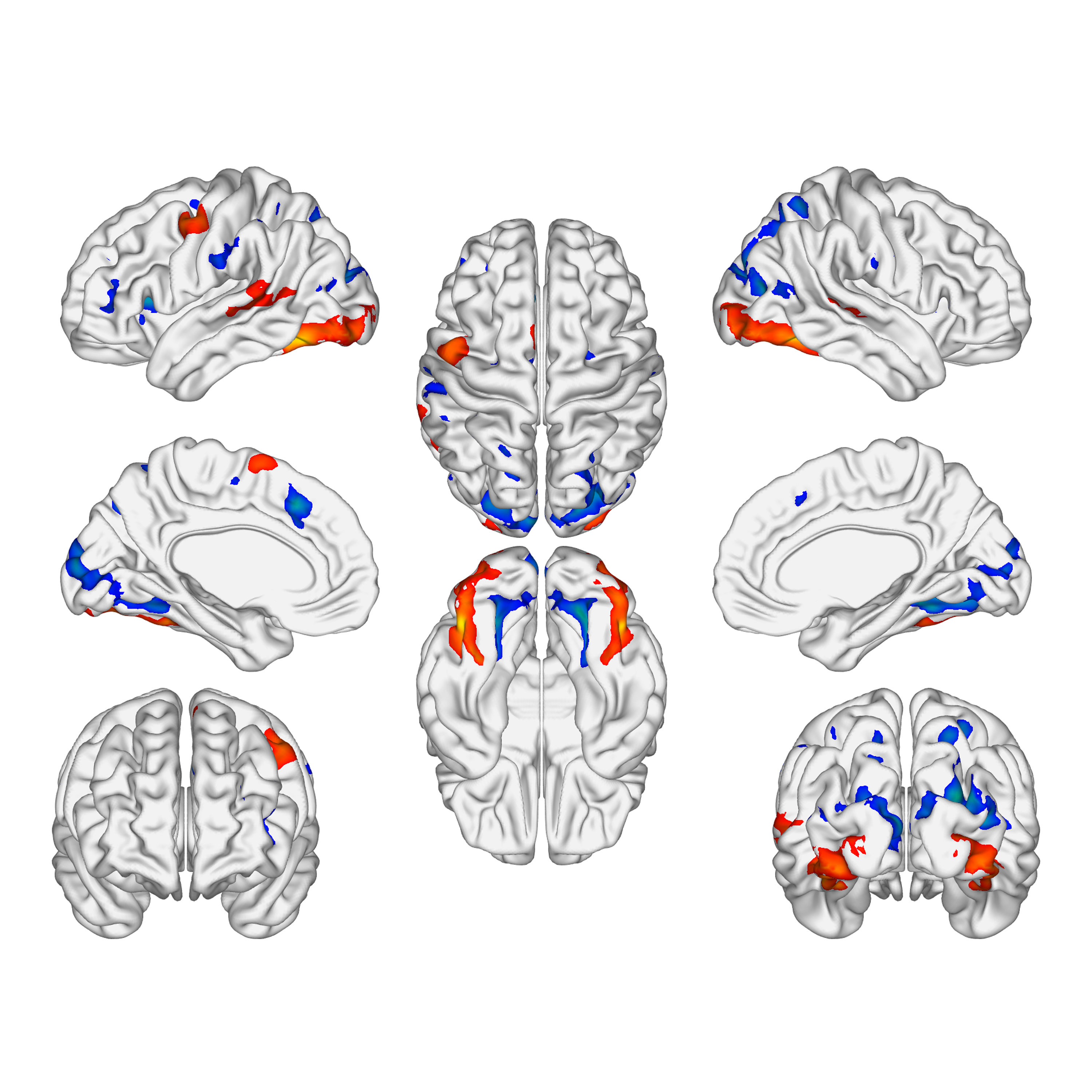

Brain area activation during the two types of imagery, as captured by fMRI

Through this analysis, the team was able to map the signal pathways in the brain during the imagery process. “We applied the multivoxel pattern analysis (MVPA) and Dynamic Causal Modeling (DCM) methods to analyze the massive fMRI data gathered,” said Chu Qian, a Class of 2022 NYU Shanghai alumnus and first author of the study. “MVPA is used to reveal imagery contents based on differential brain activities, and DCM is a statistical approach that allows us to discover the interactions and causal relationships between different brain areas based on measured brain activity data.” The team concluded that an intermediate stage, termed somatosensory prediction, is involved in the realization of motor-based imagery. These results, which supported the team’s hypotheses, provide the first neural evidence showcasing the mechanism of imagery as a fundamental cognitive function in humans.
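
For readers curious about what "revealing imagery contents" from activity patterns can look like in practice, the following is a toy sketch of the general idea behind MVPA, not the study's actual pipeline. It simulates voxel patterns for two hypothetical imagery conditions and checks whether a simple nearest-centroid decoder can tell them apart on held-out trials. All names, the simulated data, and the choice of classifier are assumptions made for illustration.

```python
import random


def simulate_patterns(n_trials, n_voxels, signal, rng):
    """Simulate voxel patterns for two hypothetical imagery conditions."""
    # Assumption: each condition has a fixed spatial template, and every
    # trial is that template (scaled by `signal`) plus Gaussian noise.
    templates = {
        "speech": [rng.gauss(0, 1) for _ in range(n_voxels)],
        "sound": [rng.gauss(0, 1) for _ in range(n_voxels)],
    }
    patterns, labels = [], []
    for label, template in templates.items():
        for _ in range(n_trials):
            patterns.append([signal * t + rng.gauss(0, 1) for t in template])
            labels.append(label)
    return patterns, labels


def mean_pattern(rows):
    """Average a list of patterns voxel by voxel."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def squared_distance(a, b):
    """Squared Euclidean distance between two patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def leave_one_out_accuracy(patterns, labels):
    """Decode each trial from centroids built on all other trials."""
    correct = 0
    for i, (test, truth) in enumerate(zip(patterns, labels)):
        centroids = {}
        for label in set(labels):
            train = [
                p
                for j, (p, lab) in enumerate(zip(patterns, labels))
                if j != i and lab == label
            ]
            centroids[label] = mean_pattern(train)
        # Predict the condition whose average pattern is closest.
        predicted = min(centroids, key=lambda lab: squared_distance(test, centroids[lab]))
        correct += predicted == truth
    return correct / len(patterns)


rng = random.Random(0)  # fixed seed so the simulation is repeatable
patterns, labels = simulate_patterns(n_trials=20, n_voxels=50, signal=1.0, rng=rng)
accuracy = leave_one_out_accuracy(patterns, labels)
print(f"decoding accuracy: {accuracy:.2f}")
```

Decoding accuracy well above the 50% chance level would indicate that the two conditions evoke distinguishable activity patterns, which is the kind of evidence MVPA is used to provide. Real analyses work on preprocessed fMRI data and typically use more robust classifiers and statistical tests.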

The study represents the collaborative efforts of multiple generations of members of the Speech, Language, and Neuroscience Group (SLANG), a joint research team co-led by Tian under the NYU-ECNU Institute of Brain and Cognitive Science at NYU Shanghai. First author Chu Qian joined Tian’s research team as a sophomore at NYU Shanghai and is now pursuing a doctoral degree at the Max Planck-University of Toronto Centre for Neural Science and Technology. Other authors of the study include Ma Ou, a 2020 Master's degree graduate of the NYU Shanghai - ECNU Joint Graduate Training Program (N.E.T.), and Hang Yuqi, a former research intern with the SLANG group.

Redefining the framework of cognitive neuroscience is a key objective of the SLANG group. The study shows that the motor and memory systems can generate imagery, a high-level cognitive function. This aligns with SLANG’s mission to incorporate action into the cognitive process and prompts a reevaluation of the role of action in cognitive functions.

Tian said the study has potential implications for advancements in brain-computer interface (BCI) technology. “Currently there are already quite a number of exciting BCI applications such as prosthetic control, which enable individuals with limb loss or paralysis to control prosthetic limbs using their brain signals,” he said. “In these applications, the brain generates signals that reflect specific intentions or commands, which are then decoded by a computer to implement corresponding actions or movements.” The study, he explained, indicates the origins and pathways that generate these signals. “Once the complete mechanism is understood, more accurate and efficient BCI innovations based on the entire dynamic neural network systems might be achieved,” Tian said.

Chu added that the research team hopes the findings could offer insights into the treatment of auditory hallucinations, where individuals perceive sounds that aren’t there. Current neural evidence suggests that such activation in the auditory cortex could result from impairment of the motor and memory systems. “Most research in the field so far tends to analyze the motor and memory systems separately,” he said, “but according to our study, combining the two directions together might point to a new direction for treatment approaches.”